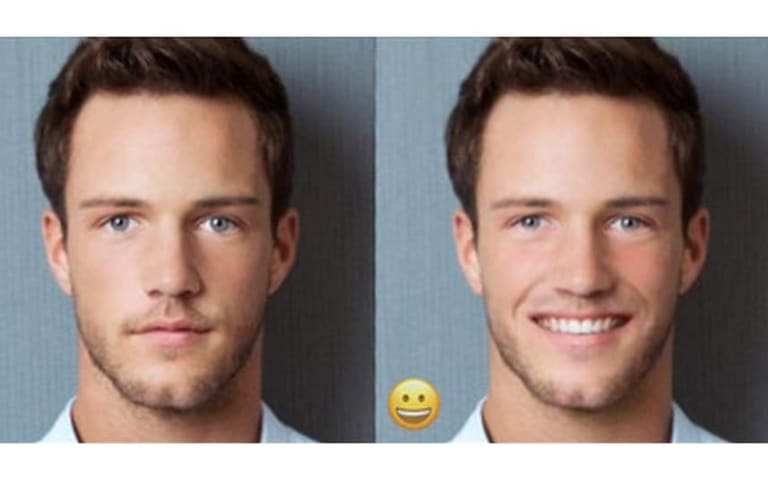

Description: A Twitter user reportedly used generative AI to modify a short clip of Disney's 2022 version of "The Little Mermaid," replacing a Black actress with a white digital character.

Entities

Alleged: unknown developed an AI system deployed by @TenGazillioinIQ, which harmed Halle Bailey and Black actresses.

Incident Stats

Incident ID

351

Report Count

1

Incident Date

2022-09-13

Editors

Khoa Lam

Applied Taxonomies

CSETv1_Annotator-1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

351

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, whether an AI was involved, or whether there is a characterizable class or subgroup of harmed entities. It also does not assess whether an intangible harm occurred. It asks only whether a special interest intangible harm occurred.

maybe

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2022

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

09

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

12

Estimated Date

“Yes” if the data was estimated. “No” otherwise.

No

CSETv1_Annotator-3 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

351

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, whether an AI was involved, or whether there is a characterizable class or subgroup of harmed entities. It also does not assess whether an intangible harm occurred. It asks only whether a special interest intangible harm occurred.

yes

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2022

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

09

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

12

Estimated Date

“Yes” if the data was estimated. “No” otherwise.

Yes

Incident Reports

Reports Timeline

nypost.com · 2022

Twitter decided two users could not be part of their world after an artificial intelligence scientist “whitewashed” actress Halle Bailey in “The Little Mermaid” trailer.

In addition to suspending their respective accounts, the AI guy has be…

Variants

A "variant" is an incident that shares the same causative factors, produces similar harms, and involves the same intelligent systems as a known AI incident. Rather than index variants as entirely separate incidents, we list variations of incidents under the first similar incident submitted to the database. Unlike other submission types to the incident database, variants are not required to have reporting in evidence external to the Incident Database. Learn more from the research paper.

Similar Incidents

FaceApp Racial Filters · 23 reports

TayBot · 28 reports

Images of Black People Labeled as Gorillas · 24 reports
